Maximizing Productivity with AI Coding Agents

The AI Coding Agent Revolution

AI coding agents have evolved far beyond autocomplete. Today's tools can autonomously implement features, debug complex issues, and handle entire workflows while you focus on higher-level work. But here's the thing: having access to powerful tools doesn't automatically make you productive. The difference between "AI helps sometimes" and "AI makes me 3x more effective" comes down to how you set up your workflows and build institutional knowledge.

This guide covers the practical side of AI-assisted development: understanding the tool landscape, setting up efficient workflows, and building team-wide capabilities that compound over time.

Understanding the AI Coding Agent Landscape

Modern AI coding agents generally fall into two categories, each suited to different types of work:

Autonomous Agents

Work independently on well-defined tasks. You assign work, they execute, you review later.

- Devin: Full-stack autonomous agent for complex features

- Cursor Background Agent: Cloud-based task execution

- Linear + Cursor: Assign issues directly to Cursor's agent via @cursor

- GitHub Copilot Workspace: Issue-to-PR automation

Collaborative Agents

Work alongside you in real-time. Best for exploration, debugging, and complex problem-solving.

- Claude Code: Terminal-based pair programming

- Cursor Composer: Multi-file editing with context

- Windsurf Cascade: Agentic IDE workflows

Understanding which type of agent to use for which task is crucial. If you haven't already, check out my blog post on delegating vs leveraging for a framework on matching tasks to the right workflow.

Setting Up Your AI Workflow

Cloud-Based Agents for Delegation

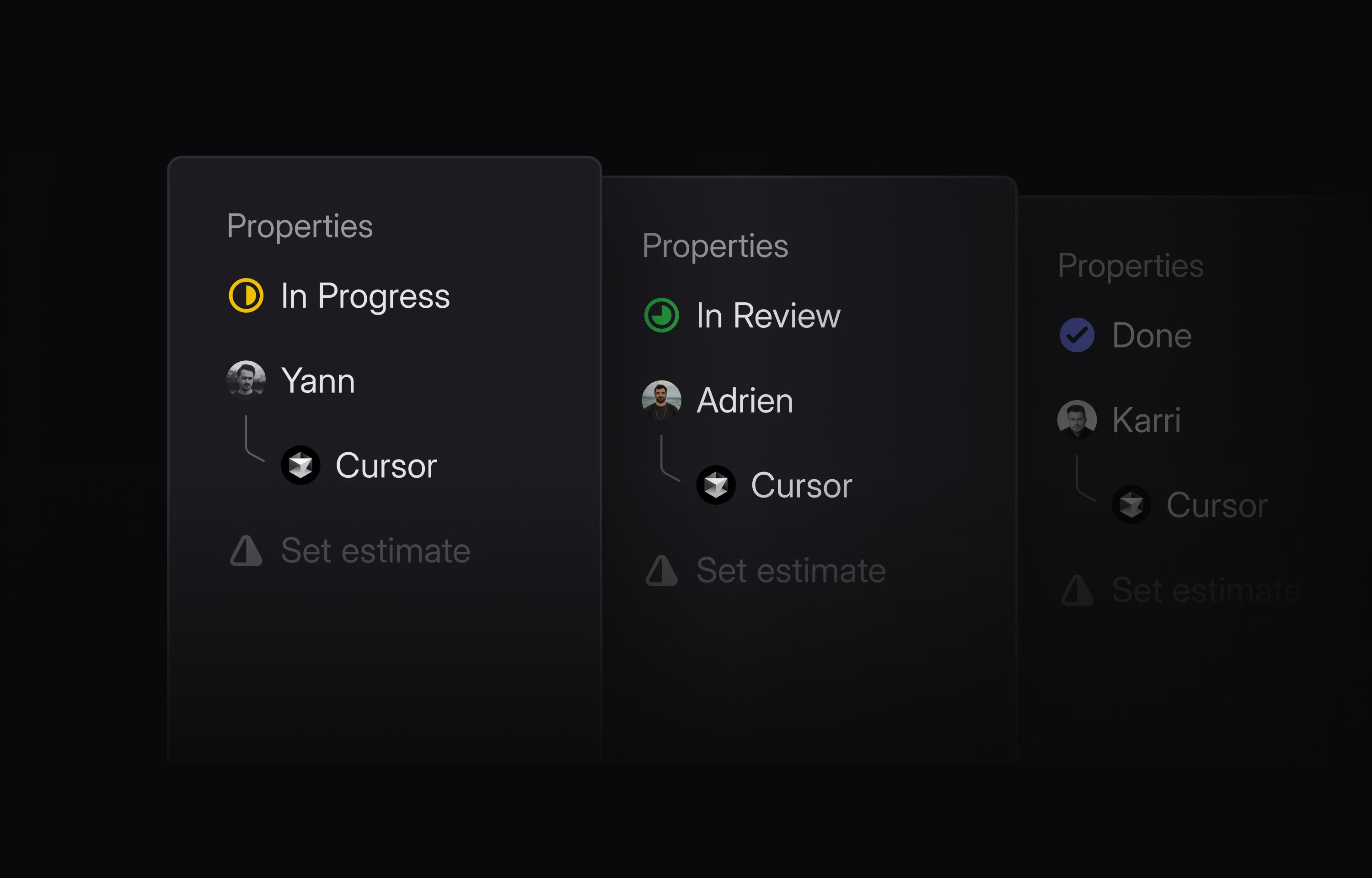

Modern AI coding tools offer autonomous agent capabilities that can work on tasks independently while you focus on other things. The workflow is seamless: create a task in your project management tool, connect it to your AI coding assistant (many tools offer integrations with Linear, GitHub Issues, or Jira), assign it to an agent, and let it work autonomously.

For example, we use cloud-based AI agents that can handle delegated tasks in the background. The key is having a clear handoff process. The agent needs to know exactly what to do, where to find relevant code, and what success looks like.

Cloud-based AI agents can handle delegated tasks autonomously

Project Management Integrations

The real power of autonomous agents comes from project management integrations that let you delegate directly from your issue tracker. The Linear + Cursor integration is a great example: you can mention @cursor in any Linear issue comment or select Cursor from the assignee menu, and the cloud agent automatically picks up the task, works on it, and creates a PR when done, all while keeping Linear updated with progress.

In our team, we've found a pretty convenient workflow (not an endorsement, just what works for us): when non-engineers flag an issue or bug in Slack, they can write "@linear create a ticket based on this context" to instantly create a properly formatted ticket with all the relevant discussion. Then we just assign it to @cursor and let it handle the investigation and fix. It's seamless because everything stays in the tools we're already using, with no context switching required.

Setting Up an Effective Delegation Pipeline:

1. Issue Creation: Use Slack integrations or voice-to-text tools to quickly capture tasks with full context.

2. Task Enrichment: Add code references, examples, and success criteria before assigning to an agent.

3. Agent Assignment: Match the task complexity to the right agent capability.

4. Batch Review: Schedule time to review completed tasks together rather than context-switching throughout the day.
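If you want to make the enrichment step checkable rather than a matter of habit, here's a minimal TypeScript sketch. It isn't part of any tool's API: the `TaskSpec` shape and the `missingForDelegation` helper are hypothetical names, and it assumes a task is ready to hand off once it has a description, at least one code reference, and at least one success criterion. The sample task is made up for illustration.

```typescript
// Hypothetical shape for a task that is about to be delegated to an agent.
interface TaskSpec {
  title: string;
  description: string;
  codeReferences: string[]; // file paths or symbols the agent should start from
  successCriteria: string[]; // observable conditions that mean "done"
}

// Returns the names of the fields that still need enrichment.
// An empty array means the task is ready to assign.
function missingForDelegation(task: TaskSpec): string[] {
  const missing: string[] = [];
  if (task.description.trim().length === 0) missing.push("description");
  if (task.codeReferences.length === 0) missing.push("codeReferences");
  if (task.successCriteria.length === 0) missing.push("successCriteria");
  return missing;
}

// Illustrative task only: it has a description and a success criterion,
// but nobody has pointed the agent at any code yet.
const draft: TaskSpec = {
  title: "Fix checkout total rounding",
  description: "Totals are off by one cent for some carts.",
  codeReferences: [],
  successCriteria: ["All tests pass"],
};

const gaps = missingForDelegation(draft); // ["codeReferences"]
```

A check like this could run before step 3, so that under-specified tasks go back to their author instead of to an agent.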

Context Is Everything: The agents.md Pattern

One thing I see people struggle with is giving AI the right context about their codebase. You can't just say "update the context" and expect your AI to know what you mean. Is it React Context? Some other state management? A feature flag?

Here's what works: create a documentation file (like agents.md or .ai-context.md) in your repo that explains your codebase patterns. Not the whole architecture diagram. Just the stuff that's confusing or non-obvious:

# Codebase Patterns for AI Agents

## State Management

- **CLS Store**: Our custom store in src/store/cls-store.ts
  - Used for conversation-level state
  - Accessible via useCLSStore() hook

- **React Context**: Only for theme and auth
  - ThemeContext in src/contexts/theme
  - AuthContext in src/contexts/auth

## Feature Flags

- Managed via config/features.ts

- Check flags with useFeature('FLAG_NAME')

- NEVER check feature flags in server-side loaders

## Common Gotchas

- "Context" usually means CLS Store, not React Context

- All API calls go through src/lib/api-client.ts

- Database queries must use the transaction wrapper

## Testing Patterns

- Unit tests use vitest with @testing-library/react

- E2E tests use Playwright

- Mock external APIs with MSW handlers in tests/mocks/

Now when you tell your AI "update the context to include user preferences," it knows exactly what you mean. No more back-and-forth clarifying which "context" you're talking about. This single file has probably saved our team hours of miscommunication.

What to Include in Your agents.md

Include ✓

- Non-obvious naming conventions

- Custom abstractions and their purposes

- Common gotchas and pitfalls

- File organization patterns

- Testing conventions

- API client usage patterns

- State management approach

Skip ✗

- Full architecture diagrams

- Complete API documentation

- Obvious patterns (standard React, etc.)

- Duplicating existing docs

- Implementation details that change often
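One way to keep the file from drifting is a small check that it still has the sections your team agreed on. The sketch below is an assumption-laden illustration, not an established tool: `sectionHeadings` and `missingSections` are hypothetical helpers, the expected section names are whatever your team chooses, and the file contents are passed in as a string so the example stays self-contained.

```typescript
// Returns the text of every level-2 heading ("## Title") in a markdown string.
function sectionHeadings(markdown: string): string[] {
  return markdown
    .split("\n")
    .filter((line) => line.indexOf("## ") === 0)
    .map((line) => line.slice(3).trim());
}

// Reports which expected sections are absent, ignoring letter case.
function missingSections(markdown: string, expected: string[]): string[] {
  const present = sectionHeadings(markdown).map((h) => h.toLowerCase());
  return expected.filter((name) => present.indexOf(name.toLowerCase()) === -1);
}

// A trimmed-down agents.md, reduced to its headings for the example.
const agentsMd = [
  "# Codebase Patterns for AI Agents",
  "## State Management",
  "## Feature Flags",
  "## Common Gotchas",
].join("\n");

const missing = missingSections(agentsMd, ["State Management", "Testing Patterns"]);
// missing is ["Testing Patterns"]
```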

Advanced Productivity Patterns

Codifying Your Debugging Process

You can codify your systematic debugging workflows into reusable patterns. Think about it: when you're hunting for a bug, you probably follow the same process every time. Check recent git commits, look at related files, trace the data flow, check the tests, etc.

Instead of manually guiding your AI through this process every time, you can create a documented workflow or custom instruction set. Now when you say "help me debug this checkout issue," your AI assistant automatically knows to:

- Check recent commits in the checkout-related files

- Look for changes in payment processing logic

- Review error logs and test failures

- Trace the user flow through the code

- Check for timing/race conditions

The agent works autonomously through your systematic debugging process and comes back with either "found the bug" or "didn't find the bug, here's what I checked." Either way, you saved 30 minutes of manual investigation. Many AI coding tools support custom instructions, project-level prompts, or skill systems to encode these workflows. Check out my blog post on turning expertise into reusable workflows to learn more.
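How you encode the workflow depends on your tool, but the underlying idea is just an ordered checklist turned into instructions. Here's a minimal sketch under that assumption: `DebugPlaybook` and `renderPlaybook` are hypothetical names, and the output is plain text you could paste into whatever custom-instruction mechanism your tool provides.

```typescript
// A debugging playbook as plain data: an ordered list of steps for one area.
interface DebugPlaybook {
  area: string;
  steps: string[];
}

// The steps are the checkout checklist from the list above.
const checkoutPlaybook: DebugPlaybook = {
  area: "checkout",
  steps: [
    "Check recent commits in the checkout-related files",
    "Look for changes in payment processing logic",
    "Review error logs and test failures",
    "Trace the user flow through the code",
    "Check for timing/race conditions",
  ],
};

// Renders the playbook as numbered instructions, ending with a request
// to report what was checked even when no bug is found.
function renderPlaybook(playbook: DebugPlaybook): string {
  const header = `When debugging ${playbook.area} issues, work through these steps in order:`;
  const numbered = playbook.steps.map((step, i) => `${i + 1}. ${step}`);
  const footer =
    "Report which steps you completed and what each one showed, even if no bug was found.";
  return [header].concat(numbered, [footer]).join("\n");
}

const instructions = renderPlaybook(checkoutPlaybook);
```

Keeping the steps as data means the same playbook can be reviewed in a PR and reused across tools, instead of living in one person's prompt history.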

Creating Reusable Task Templates

For tasks you do repeatedly, create specification templates that capture your requirements:

## Feature Flag Removal Template

**Task:** Remove [FLAG_NAME] feature flag

**Context:** The [FLAG_NAME] feature flag has been enabled in production for [X] weeks with no issues. Time to clean up the old code path.

**Requirements:**

1. Remove flag definition from config/features.ts
2. Remove all conditional checks for [FLAG_NAME]
3. Keep the [new/old] code path
4. Delete deprecated components: [list]
5. Rename components (remove V2 suffix if applicable)
6. Update all imports throughout the codebase
7. Update tests to remove feature flag mocking

**Success Criteria:**

- No references to [FLAG_NAME] remain
- All tests pass
- Application builds without warnings
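Filling in a template like this by hand is where placeholders get missed. As a rough sketch, a small helper can substitute the values and report anything left unfilled. `fillTemplate` is a hypothetical function, `NEW_CHECKOUT` is a made-up flag name, and the pattern only matches upper-case placeholders such as [FLAG_NAME] and [X], so free-form slots like [new/old] and [list] are left for a human to edit.

```typescript
// Replaces every [KEY] placeholder (upper-case letters and underscores)
// with the matching value. Placeholders without a value are left in place
// and reported, so an incomplete spec is never silently sent to an agent.
function fillTemplate(
  template: string,
  values: Record<string, string>
): { text: string; unfilled: string[] } {
  const unfilled: string[] = [];
  const text = template.replace(/\[([A-Z_]+)\]/g, (match: string, key: string) => {
    const value = values[key];
    if (value === undefined) {
      if (unfilled.indexOf(key) === -1) unfilled.push(key);
      return match;
    }
    return value;
  });
  return { text, unfilled };
}

// Two lines of the template above, shortened for the example.
const template =
  "**Task:** Remove [FLAG_NAME] feature flag\n" +
  "**Context:** Enabled in production for [X] weeks with no issues.";

const result = fillTemplate(template, { FLAG_NAME: "NEW_CHECKOUT" });
// result.text begins with "**Task:** Remove NEW_CHECKOUT feature flag"
// result.unfilled is ["X"]
```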

Voice-to-Text for Faster Specs

One productivity hack that's been game-changing: use voice-to-text tools like Super Whisper or the built-in dictation on your OS to quickly capture task specifications. It's much faster to describe what you need verbally than to type it out, especially for detailed requirements.

Speak your requirements, let the tool transcribe them, then quickly clean up the text before creating your task. This alone has probably doubled my delegation throughput.

Building Team Capabilities

Okay, so you've figured out how to be productive with AI. That's great for you. But if you're on a team, the real win is getting everyone else up to speed too. And that means documenting what works and what doesn't.

Expanding the agents.md Pattern

That agents.md file for context patterns? That's just the start. Turn it into a living document that captures everything your team learns about working with AI:

- Failure Patterns: "Don't ask AI to refactor auth logic, it always misses edge cases"

- Success Templates: Proven task specifications that work reliably

- Context Guidelines: How to reference your specific codebase patterns

- Agent Strengths: "Use Devin for full features, Claude Code for debugging, Cursor for quick edits"

Keep this in Notion or your wiki. Make it searchable. Update it when you learn something new. This is how you go from "some people on the team use AI sometimes" to "everyone on the team is 3x more productive."
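If some of that knowledge is stable enough, you can also keep it in a form that scripts can read. Here's a tiny sketch of the "Agent Strengths" entry as a lookup table. The `TaskKind` categories and the `pickAgent` helper are hypothetical, and the mapping just mirrors the example rule quoted above, so treat it as a placeholder for whatever your own team's experience supports.

```typescript
// Hypothetical task categories; adjust to how your team actually labels work.
type TaskKind = "full-feature" | "debugging" | "quick-edit";

// Mirrors the example rule: Devin for full features, Claude Code for
// debugging, Cursor for quick edits.
const preferredAgent: Record<TaskKind, string> = {
  "full-feature": "Devin",
  "debugging": "Claude Code",
  "quick-edit": "Cursor",
};

// Looks up the team's current default agent for a kind of task.
function pickAgent(kind: TaskKind): string {
  return preferredAgent[kind];
}

const agentForBug = pickAgent("debugging"); // "Claude Code"
```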

Team Workflow Evolution

Maturity Progression

Stage 1: Individual Adoption

└─ Engineers experiment with AI tools

└─ Ad-hoc usage patterns

└─ Inconsistent results

Stage 2: Pattern Recognition

└─ Team identifies what works

└─ Document successful approaches

└─ Share learnings informally

Stage 3: Systematic Practice

└─ Establish delegation/leverage framework

└─ Create specification templates

└─ Formal knowledge sharing

Stage 4: Institutional Knowledge

└─ AI workflows become default

└─ Onboarding includes AI patterns

└─ Continuous improvement culture

Onboarding New Team Members

When bringing new engineers onto a team with established AI workflows, include these in their onboarding:

1. Tool Setup: Which AI tools the team uses and how to configure them

2. agents.md Walkthrough: Review the codebase context document

3. Template Library: Show them existing task templates and when to use them

4. Pairing Session: Watch an experienced team member use AI for a real task

5. First Delegated Task: Guide them through delegating their first task with feedback

Measuring Success

How do you know if your AI workflows are actually working? Here are some signals to watch:

Positive Signals

- ✓ Delegated tasks complete with 0-1 review loops

- ✓ Team members share successful specs in Slack

- ✓ agents.md gets updated regularly

- ✓ New hires adopt AI workflows within first week

- ✓ PR velocity increases without quality decrease

Warning Signs

- ✗ Tasks require 3+ review loops regularly

- ✗ Team members avoid AI for "important" work

- ✗ AI-generated code causes production issues

- ✗ Only one or two people use AI effectively

- ✗ No documentation of what works/doesn't work
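The review-loop signals are the easiest ones to track with numbers. Here's a minimal sketch that uses the thresholds from the lists above (0-1 loops is healthy, 3+ is a warning). Treating exactly two loops as "neutral" is my own assumption, the function names are hypothetical, and the sample data is invented for illustration.

```typescript
type Signal = "positive" | "neutral" | "warning";

// Classifies a single delegated task by how many review loops it needed.
// 0-1 loops is positive, 3 or more is a warning, and 2 is treated as neutral.
function classifyReviewLoops(loops: number): Signal {
  if (loops <= 1) return "positive";
  if (loops >= 3) return "warning";
  return "neutral";
}

// Share of tasks (between 0 and 1) that needed three or more review loops.
function warningRate(loopsPerTask: number[]): number {
  if (loopsPerTask.length === 0) return 0;
  const warnings = loopsPerTask.filter(
    (n) => classifyReviewLoops(n) === "warning"
  ).length;
  return warnings / loopsPerTask.length;
}

// Illustrative data only: review loops for five delegated tasks.
const sample = [0, 1, 1, 2, 4];
const rate = warningRate(sample); // one task out of five, so 0.2
```

Watching how this rate moves over a few weeks says more than any single task does, since one messy review doesn't mean the workflow is broken.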

Key Takeaways

1. Understand the Tool Landscape: Match autonomous agents to well-defined tasks and collaborative agents to exploration and complex problems.

2. Invest in Workflow Setup: Project management integrations and streamlined pipelines pay dividends in reduced friction.

3. Create an agents.md File: Document non-obvious patterns, gotchas, and conventions to give AI the context it needs.

4. Codify Repeatable Workflows: Turn debugging processes and common tasks into reusable templates and custom instructions.

5. Build Team Knowledge: Document successes and failures, share learnings, and include AI workflows in onboarding.

6. Measure and Iterate: Watch for positive and negative signals, and keep improving your workflows based on what you learn.

Wrapping Up

The teams that will thrive in the AI era aren't just the ones with access to the best tools. They're the ones that build systems around those tools. Creating an agents.md file takes an hour. Setting up project management integrations takes an afternoon. Building a culture of sharing AI learnings takes consistent effort but pays off exponentially.

Start small. Create your first agents.md file today. Write one task template for something you do often. Share what works with your team. These small investments compound into massive productivity gains over time.

The goal isn't just to make yourself more productive. It's to make your entire team unstoppable.